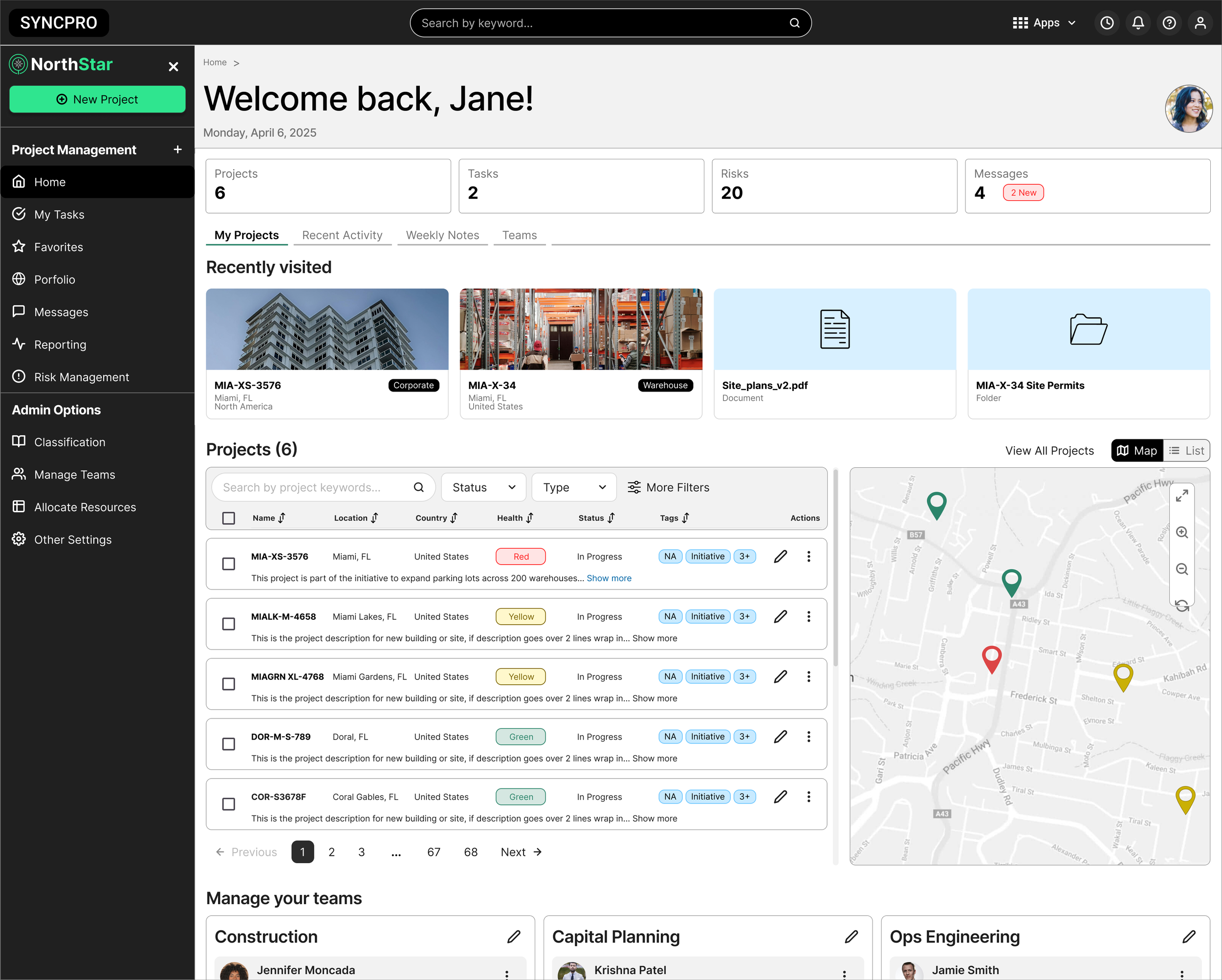

Unifying program management for distributed teams

A 0→1 enterprise platform designed to replace fragmented workflows with a more scalable system for tracking work, sharing updates, and communicating project health.

Role: Product Designer

Timeline: 2024-2026

Platform: Web App

🔒 For NDA confidentiality purposes, original product imagery has been omitted.

This case study uses generalized language and mock data to protect proprietary information while preserving the design process and outcomes.

My Role

As the product designer on a 0→1 enterprise program management platform, I led the design process from discovery through launch, helping shape a unified system for managing projects, updates, and reporting across multiple personas.

✅ Conducted research

✅ Co-led workshops with product managers

✅ Mapped user journeys & workflows

✅ Created wireframes

✅ Validated concepts with users

✅ Partnered with product and engineering teams

✅ Helped shape the MVP scope under evolving priorities

UX RESEARCH

KPI METRICS

USABILITY TESTING

USER JOURNEY

The Result

A more scalable platform that improved reporting consistency, increased visibility into project health, and helped drive CSAT from 3.5/5 to 4.38/5 within one quarter, while adoption grew from 1,000 to 4,000 users within two quarters.

0→1

MVP Launched

in 12 months

4.38/5

Avg. Customer Satisfaction

Score within the first year.

1K → 4K

Monthly Active Users

Within two quarters post-launch

The Challenge

Teams across regions had no shared system for managing projects, tracking progress, or reporting status to leadership. Over time, everyone built their own process — and it showed.

The result was a fragmented environment where the same update lived in three different places, no two teams spoke the same language, and leadership had no reliable way to see what was actually happening across the organization.

The challenge wasn't just to build a better tool. It was to design a system that multiple personas could actually adopt — one that reduced the operational overhead without requiring everyone to change how they worked overnight.

Pain Points

Manual repetitive updates

Teams copied the same status across multiple tools with no single source of truth, creating unnecessary rework every cycle.

Terminology mismatches

Different teams used different language for the same work, making unified reporting to leadership inconsistent and unreliable.

No dependency visibility

When one team's work unblocked another, there was no way to know. People found out by asking around or missing deadlines.

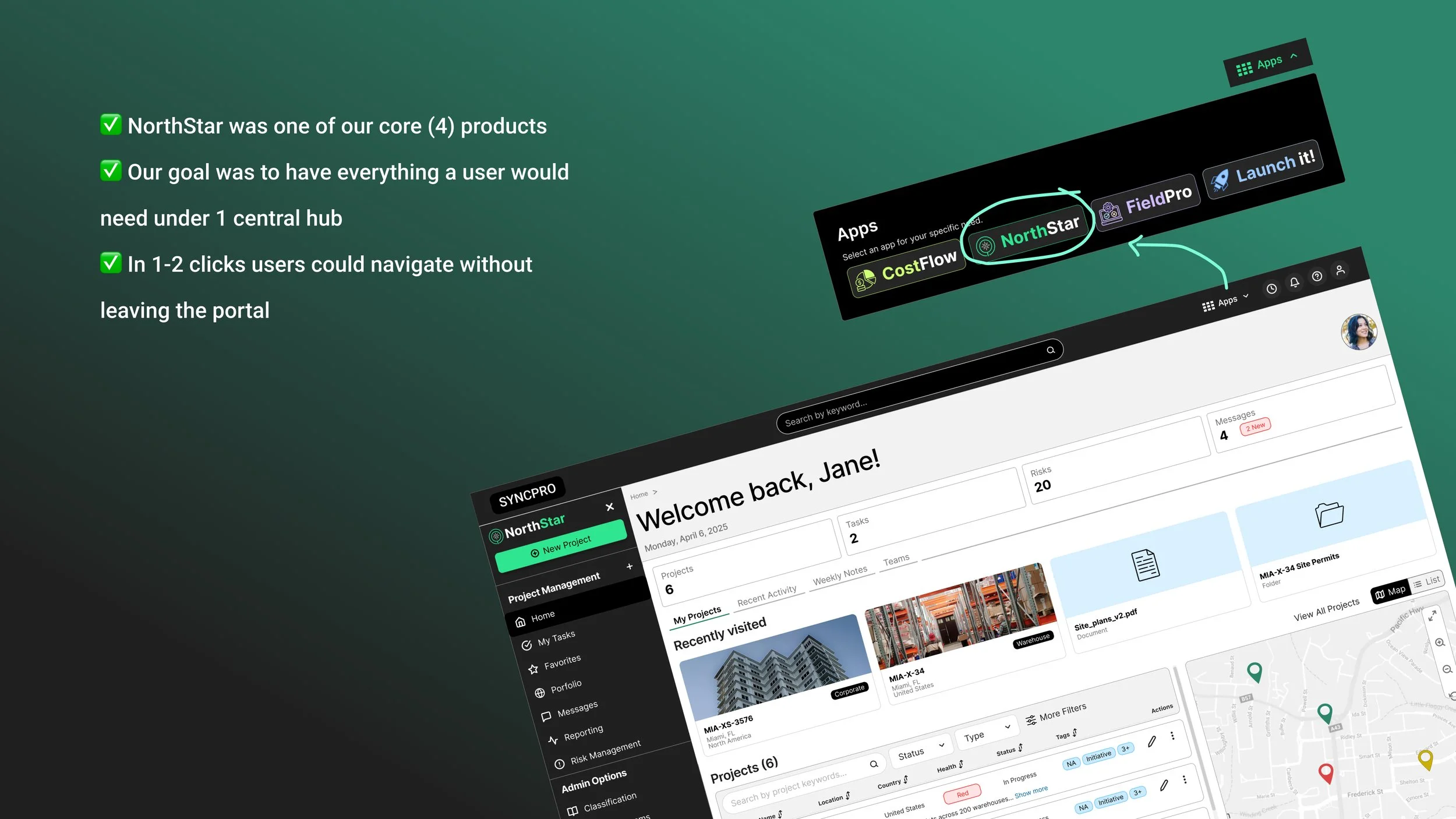

Disconnected tools

The average user toggled between several platforms to complete one workflow, creating friction and scattered, inconsistent data.

Framing user needs through

Jobs to Be Done (JTBD)

To better understand what users were ultimately trying to accomplish, I mapped their needs through a Jobs to Be Done framework. This helped shift the focus away from features and toward the real outcomes users needed to achieve across planning, coordination, and execution.

What is JTBD?

Jobs to Be Done (JTBD) is a framework for understanding what people are really trying to accomplish, independent of any specific tool or feature. Instead of focusing on the product itself, it helps define the underlying outcome a user needs to achieve and what success looks like from their perspective.

Persona

The primary user group of the app.

Visualizing The User Journey

Identifying where to focus

The opportunity was to create a unified system that improved visibility, reduced friction, and still supported the needs of different users.

Opportunities

Unify fragmented workflows

How might we bring disconnected project workflows into one scalable platform?

Improve project health visibility

How might we make progress, risk, and momentum easier to understand?

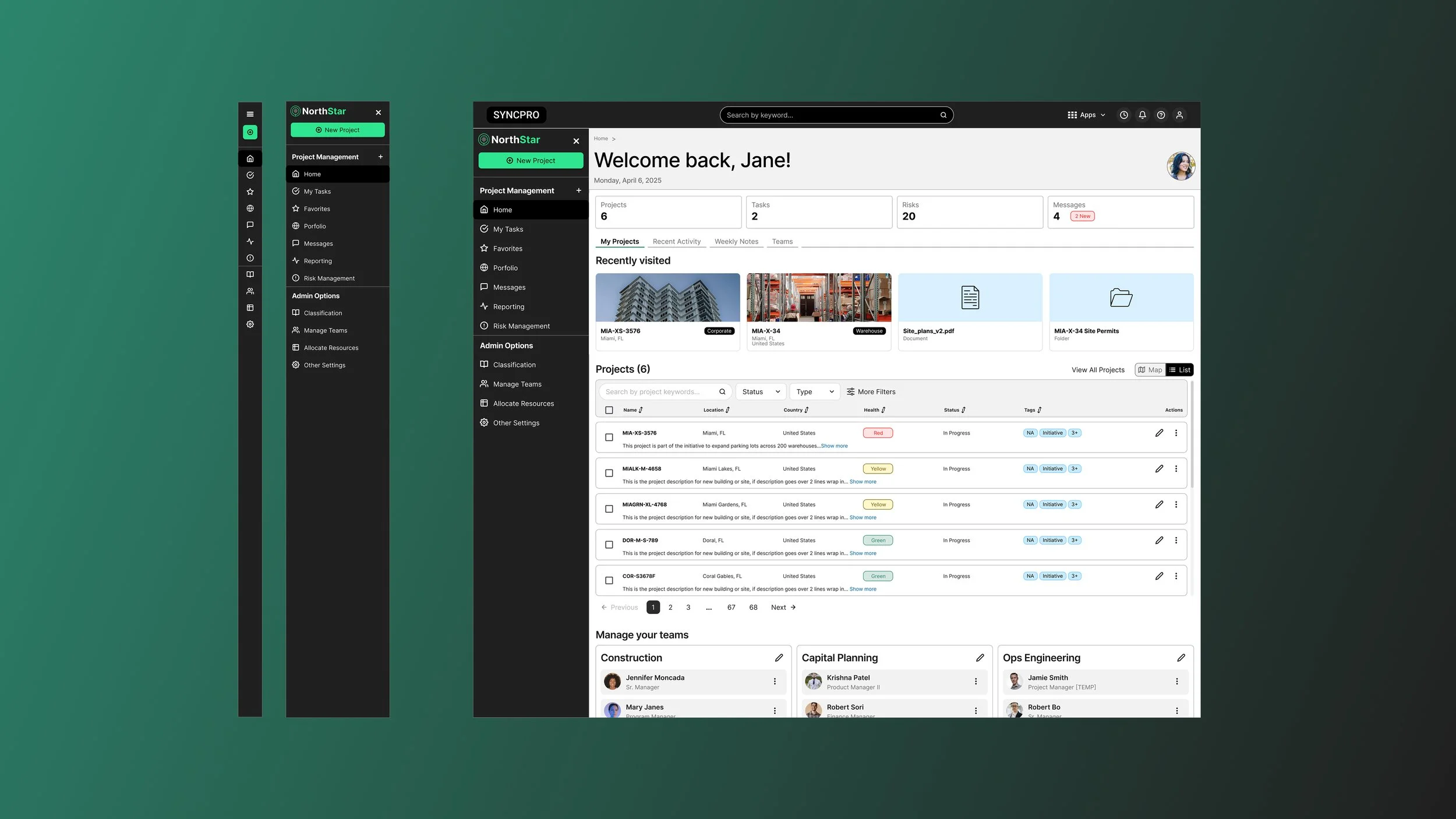

Support multiple personas

How might we balance administrative oversight with day-to-day execution in the same system?

Define the right MVP

How might we focus phase one on the highest-value workflows first?

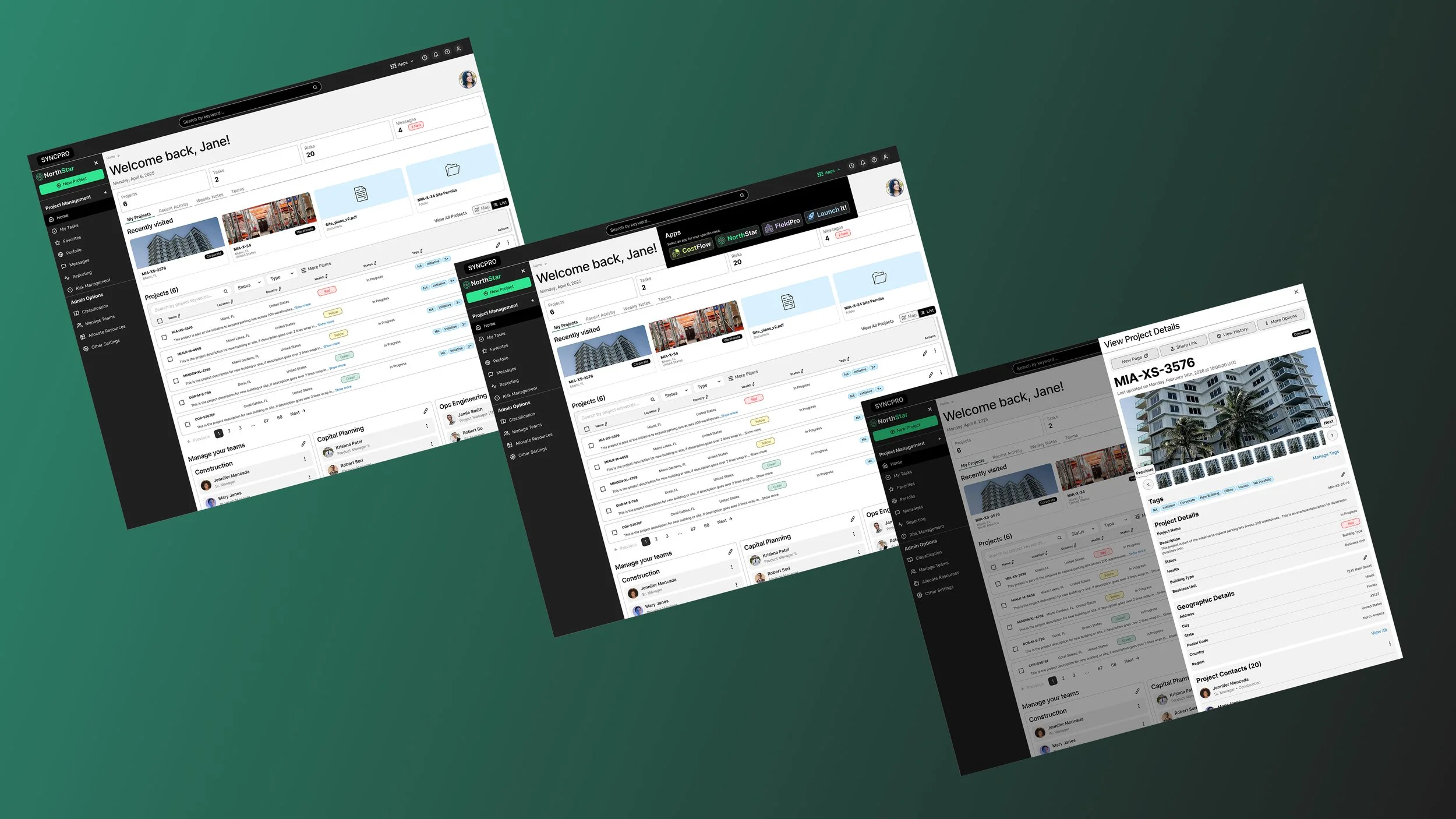

Shaping the MVP

My Approach

Discovery & Research

Led interviews, workshops, and contextual inquiry to understand fragmented workflows, user needs, and operational pain points.

This enabled: a clearer understanding of where the biggest gaps and opportunities existed.

Workflow Mapping & MVP Definition

Mapped the end-to-end journey across personas and helped define the highest-value workflows for phase one.

This enabled: a more focused MVP grounded in real user and business needs.

Concepting & Validation

Created wireframes and prototypes, then validated and refined them with users, product, and engineering.

This enabled: faster alignment and stronger confidence in the solution before launch.

Impact & Iteration

Used feedback and post-launch insights to improve usability, strengthen adoption, and raise CSAT over time.

This enabled: a better product experience that improved satisfaction and scaled more effectively.

Results and Impact

✅ More Consistent Project Workflows

Bringing fragmented tools into one platform created a more structured way for teams to manage projects, share updates, and maintain momentum across workflows.

✅ Stronger Adoption and Onboarding

A more intuitive and scalable experience helped improve onboarding completion by 30% and supported growth from 1,000 to 4,000 users within two quarters.

✅ Clearer Reporting and Project Visibility

Standardized reporting and dashboard patterns made it easier for administrators and leaders to understand progress, risks, and project health with confidence.

✅ Improved Satisfaction Through Iteration

Post-launch feedback loops helped uncover usability gaps early, contributing to a CSAT increase from 3.5/5 to 4.38/5 within one quarter.

We Built.

We Measured.

We Learned.

Reflection

Designing Northstar reinforced that complex enterprise products are rarely just about interface design. The real challenge was aligning multiple personas, evolving product direction, and business priorities into one system that could launch realistically and still create long-term value.

This project strengthened my ability to navigate ambiguity, define an MVP under pressure, and use research and feedback to guide decisions from discovery through iteration. It also reinforced that some of the most meaningful product improvements happen after launch, when real usage reveals where the experience needs to evolve.